The phrase “ElastiCache vs Redis” turns up constantly in AWS architecture discussions — on Slack, in pull requests, in design docs, in engineering all-hands meetings — and it’s almost always used as if the two are direct, equivalent alternatives. They’re not, quite. The comparison is real and worth making carefully, but it only makes sense once you understand what ElastiCache actually is, what Redis actually is, and why so many people conflate them in the first place.

Here’s the short version: ElastiCache is a managed caching service. Redis is an open-source data structure store. ElastiCache can run Redis under the hood — and usually does. So when someone says “ElastiCache vs Redis,” what they almost always mean is: should I use Amazon’s managed Redis offering, or run Redis myself on EC2? Or perhaps: should I use a third-party managed Redis service like Redis Cloud instead?

That’s the question this guide actually answers. We’ll cover what ElastiCache is, how it compares to self-managed Redis across every dimension that matters — performance, cost, operational complexity, scalability, security, and use case fit — and when each approach clearly wins. We’ll also address the Redis Cloud option, which has grown into a serious contender for specific workload types.

By the end, you’ll have a practical, engineering-grounded framework for making the ElastiCache vs Redis decision for your own stack — without oversimplifying a choice that can meaningfully shape your infrastructure’s reliability and your team’s operational burden for years.

First: What ElastiCache Actually Is (and Why People Get Confused)

Before you can evaluate ElastiCache vs Redis meaningfully, you need to understand what each term actually refers to — because the confusion here is structural, not just semantic.

Amazon ElastiCache is a fully managed in-memory caching service offered by AWS. It supports the Redis OSS and Memcached engines and, since late 2024, Valkey, an open-source fork of Redis. The key word is “managed”: ElastiCache doesn’t replace Redis or reinvent it. It wraps it. When you spin up an ElastiCache for Redis cluster, you’re running the actual open-source Redis engine on AWS-managed infrastructure. What ElastiCache adds is everything around the engine: automated provisioning, patch management, multi-AZ failover, backup and restore to S3, CloudWatch monitoring integration, VPC networking, IAM-based access control, and KMS-based encryption at rest.

Redis (Remote Dictionary Server) is an open-source, in-memory data structure store created by Salvatore Sanfilippo and first released in 2009. It supports strings, hashes, lists, sets, sorted sets, bitmaps, HyperLogLog, geospatial indexes, and streams. It can function as a cache, a message broker, a session store, a real-time leaderboard engine, a pub/sub system, and more. Redis is renowned for its performance — sub-millisecond read and write latencies at very high throughput — and its simplicity of operation for basic use cases.

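The confusion arises because “ElastiCache for Redis” and “self-managed Redis” are both Redis under the hood. They share the same command set, the same data structures, the same protocol, and largely the same behavior. The difference between them is mostly about who manages the operational infrastructure: you or AWS. The main exceptions are managed-service limits. ElastiCache restricts a handful of administrative commands (such as CONFIG and REPLICAOF) and exposes only a curated set of configuration parameters.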

Since late 2023, AWS has also offered ElastiCache Serverless, a capacity-on-demand variant that scales automatically without requiring you to select node types or manage cluster sizing. This is a meaningful evolution for teams that have unpredictable or highly variable traffic patterns and don’t want to over-provision capacity.

There’s a third player worth acknowledging: Amazon MemoryDB for Redis, which is often conflated with ElastiCache but serves a different purpose. MemoryDB stores data durably in a distributed transaction log, making it suitable as a primary database rather than a pure cache. If you’re evaluating ElastiCache vs Redis for a use case where you need guaranteed durability without data loss on failover, MemoryDB is worth understanding separately — we’ll cover it briefly in the architecture section.

ElastiCache vs Redis: Setup and Operational Complexity

Operational complexity is the first and most consequential dimension to evaluate in the ElastiCache vs Redis comparison, because it shapes not just your infrastructure cost but your team’s day-to-day engineering burden.

Self-Managed Redis on EC2 :

Running Redis yourself on EC2 gives you complete control — and complete responsibility. At a minimum, a production-grade self-managed Redis deployment requires:

- Provisioning EC2 instances with appropriate sizing (memory, CPU, network throughput)

- Installing and configuring Redis, including redis.conf tuning for your specific workload

- Setting up replication — either Redis Sentinel for automatic failover in primary/replica topologies, or Redis Cluster for distributed sharding

- Configuring persistence settings (RDB snapshots and/or AOF logging) appropriate to your durability requirements

- Building a backup strategy and restore procedure — and actually testing it

- Setting up monitoring and alerting (typically integrating with CloudWatch, Datadog, or Prometheus)

- Establishing a patch and upgrade cadence — and handling the operational steps each upgrade requires

- Handling network security group rules, TLS configuration, and Redis AUTH setup manually

- Responding to incidents: node failures, replication lag, memory pressure, cluster splits

For a small team that runs one or two Redis clusters and has engineers with strong Redis expertise, this is manageable. For a larger organization running dozens of Redis clusters across multiple services, this operational surface area becomes a significant engineering investment — one that competes directly with product development time.

ElastiCache for Redis :

ElastiCache compresses almost all of the above into configuration choices rather than operational tasks. You select your node type, configure your replication group and shard count, set your maintenance window and backup retention period, enable Multi-AZ, and define your security settings. AWS handles provisioning, patching (within your defined maintenance window), failover, backup execution, and the underlying infrastructure health.
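To make “configuration choices” concrete, here is a minimal sketch that assembles the keyword arguments for boto3’s ElastiCache `create_replication_group` call. The group ID, node type, subnet group name, security group ID, and maintenance window are hypothetical placeholders. The function only builds a dictionary, so it runs without AWS credentials. A multi-shard (cluster mode enabled) group may also need a cluster-enabled parameter group, so check the ElastiCache documentation for your engine version before relying on it.

```python
from typing import Any


def build_replication_group_params(
    group_id: str,
    node_type: str = "cache.r7g.large",
    shards: int = 1,
    replicas_per_shard: int = 1,
    subnet_group: str = "my-cache-subnet-group",                      # hypothetical
    security_group_ids: tuple[str, ...] = ("sg-0123456789abcdef0",),  # hypothetical
    backup_retention_days: int = 7,
) -> dict[str, Any]:
    """Build keyword arguments for boto3's create_replication_group call."""
    if replicas_per_shard < 1:
        # Automatic failover and Multi-AZ need at least one replica per shard.
        raise ValueError("Multi-AZ failover needs at least one replica per shard")
    return {
        "ReplicationGroupId": group_id,
        "ReplicationGroupDescription": f"{group_id} cache",
        "Engine": "redis",
        "CacheNodeType": node_type,
        "NumNodeGroups": shards,                     # shard count
        "ReplicasPerNodeGroup": replicas_per_shard,  # replicas in each shard
        "AutomaticFailoverEnabled": True,
        "MultiAZEnabled": True,
        "CacheSubnetGroupName": subnet_group,
        "SecurityGroupIds": list(security_group_ids),
        "SnapshotRetentionLimit": backup_retention_days,      # days of automatic backups
        "PreferredMaintenanceWindow": "sun:05:00-sun:06:00",  # placeholder, UTC
        "TransitEncryptionEnabled": True,
        "AtRestEncryptionEnabled": True,
    }


params = build_replication_group_params("orders-cache")
# With AWS credentials configured, the request would be sent with:
#   boto3.client("elasticache").create_replication_group(**params)
```

Each decision from the paragraph above (shards, replicas, Multi-AZ, backup retention, maintenance window, encryption) becomes a single field rather than a runbook step.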

The tradeoff is configuration flexibility. ElastiCache doesn’t give you access to every Redis configuration parameter. There is a curated set of parameters you can modify through ElastiCache parameter groups, but some fine-grained redis.conf options aren’t exposed. For most production workloads, the available configuration surface is more than sufficient. For highly specialized use cases that require unusual Redis tuning, this can be a constraint.

The other tradeoff is cost — ElastiCache charges a premium over equivalent EC2 compute. That premium buys the managed service layer. Whether it’s worth it depends on how much your team’s time costs and how many clusters you’re running.

ElastiCache vs Redis Performance: The Real Numbers

Performance is often the first thing engineers want to benchmark, but in the ElastiCache vs Redis comparison, it’s also the least differentiating factor between the two options. Here’s why.

Latency and Throughput :

Both ElastiCache for Redis and self-managed Redis on equivalent hardware can deliver sub-millisecond latency for standard cache operations — GETs, SETs, sorted set operations, and most other Redis commands. This is an inherent property of in-memory storage and the Redis architecture, not a function of who manages the infrastructure. You won’t gain or lose meaningful latency by choosing ElastiCache over self-managed Redis if you’re running equivalent node types.

The latency you actually observe in production is determined primarily by network topology: how far network packets travel between your application tier and your Redis cluster. ElastiCache nodes run inside your VPC and can be co-located in the same Availability Zone as your application instances, minimizing round-trip time. Self-managed Redis on EC2 can achieve identical placement. Neither approach has an inherent network advantage over the other.

Where ElastiCache May Edge Ahead :

AWS has made engineering investments in ElastiCache’s network stack, particularly for high-throughput scenarios. Enhanced I/O multiplexing was introduced with the Redis 7 engine on multi-vCPU node types. It can improve throughput for workloads with large numbers of concurrent connections by pipelining commands from many client connections into the engine more efficiently. For applications running tens of thousands of simultaneous Redis connections or executing millions of operations per second, this optimization may provide measurable benefit. For the vast majority of production workloads, it won’t be detectable.

ElastiCache also benefits from AWS’s continued investment in Graviton-based node types (cache.r7g and cache.m7g families), which often provide better price-performance than older Intel-based node generations — paralleling the Graviton advantage seen across the broader EC2 ecosystem.

The Honest Performance Verdict :

If raw Redis performance is your primary concern, ElastiCache and self-managed Redis on comparable hardware are essentially equivalent. Choose between them based on operational factors, not performance promises.

High Availability and Failover: Where ElastiCache Pulls Ahead Clearly

High availability is one of the most concrete, practical advantages of ElastiCache over self-managed Redis — and for production caches where downtime has direct user impact, it deserves careful consideration.

Self-Managed Redis HA :

High availability for self-managed Redis requires you to implement and maintain one of two approaches:

Redis Sentinel handles automatic failover for primary/replica topologies. Sentinel processes monitor your Redis instances, detect primary failures, and orchestrate promotion of a replica to primary. Sentinel is mature and well-documented. Setting it up correctly, with appropriate quorum configuration, proper bind addresses, and network-aware failover timeouts, still takes operational expertise. Getting it wrong can lead to split-brain scenarios or delayed failover, which can mean minutes of cache unavailability.
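Here is a minimal sketch of the Sentinel side. It assumes a primary at a hypothetical address 10.0.1.10:6379, monitored under the name mymaster, and renders the `sentinel monitor`, `down-after-milliseconds`, `failover-timeout`, and `parallel-syncs` directives. The quorum only controls how many Sentinels must agree that the primary is unreachable; starting a failover still needs votes from a majority of all Sentinels. Setting the quorum to a strict majority, as below, is a conservative choice rather than a requirement. The timeout defaults are placeholders to tune for your network.

```python
def render_sentinel_conf(
    master_name: str,
    master_host: str,
    master_port: int,
    num_sentinels: int,
    down_after_ms: int = 5000,         # placeholder: time before a node is flagged down
    failover_timeout_ms: int = 60000,  # placeholder: bound on a single failover attempt
) -> str:
    """Render the monitoring section of a sentinel.conf file."""
    if num_sentinels < 3:
        raise ValueError("run at least three Sentinels on independent hosts")
    # Conservative choice: a strict majority must agree the primary is down.
    quorum = num_sentinels // 2 + 1
    return "\n".join([
        f"sentinel monitor {master_name} {master_host} {master_port} {quorum}",
        f"sentinel down-after-milliseconds {master_name} {down_after_ms}",
        f"sentinel failover-timeout {master_name} {failover_timeout_ms}",
        f"sentinel parallel-syncs {master_name} 1",  # resync one replica at a time
    ])


conf = render_sentinel_conf("mymaster", "10.0.1.10", 6379, num_sentinels=3)
```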

Redis Cluster handles both sharding and built-in failover across multiple shards. Each shard has its own primary and replicas, and the cluster handles failover within each shard automatically. Cluster mode is powerful but requires cluster-aware client libraries and careful attention to multi-key operation constraints (all keys in a multi-key command must hash to the same slot).

Both approaches work well when implemented correctly by teams with Redis expertise. The operational burden is the ongoing maintenance, monitoring, and incident response — not the initial setup.

ElastiCache Multi-AZ Failover :

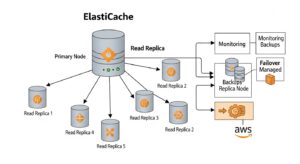

ElastiCache for Redis with Multi-AZ replication enabled handles failover automatically. When a primary node becomes unavailable, ElastiCache detects the failure and promotes a read replica to primary — typically within a few seconds to a couple of minutes, depending on cluster configuration and detection timing. No manual intervention is required. Your application reconnects to the same endpoint, and ElastiCache’s DNS update propagates the new primary’s address transparently.

This is one of the clearest operational wins for ElastiCache in the ElastiCache vs Redis comparison. For teams without dedicated infrastructure engineers who specialize in Redis — which is most teams — automated failover is a meaningful safety net. Production incidents caused by manual failover missteps are a real category of outage, and ElastiCache eliminates them for the most common failure scenarios.

The one nuance: ElastiCache failover is not instantaneous. There is a window during which your primary is unavailable and before the replica promotion completes. Applications need to handle connection errors gracefully during this window — which they should be doing regardless of whether you’re on ElastiCache or self-managed Redis. Connection retry logic and appropriate timeout settings are required either way.
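Retry logic can be sketched independently of any client library. The helper below retries an operation with capped exponential backoff and “full jitter”, so many application instances reconnecting after a failover don’t all retry at the same instant. It catches Python’s built-in ConnectionError and TimeoutError as an assumption. With redis-py you would pass `redis.exceptions.ConnectionError` and `redis.exceptions.TimeoutError` instead, because those are separate classes. Attempt counts and delays are illustrative.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_retry(
    operation: Callable[[], T],
    retryable: tuple[type[BaseException], ...] = (ConnectionError, TimeoutError),
    max_attempts: int = 5,
    base_delay: float = 0.05,
    max_delay: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run `operation`, retrying retryable errors with capped, jittered backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except retryable:
            if attempt == max_attempts:
                raise  # out of attempts: surface the error to the caller
            # Full jitter: wait a random time up to the capped exponential delay,
            # so clients reconnecting after a failover don't retry in lockstep.
            cap = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(random.uniform(0, cap))
    raise AssertionError("unreachable")
```

In application code this wraps individual calls, for example `call_with_retry(lambda: client.get("session:abc"))`. Keep the total retry budget shorter than your request timeout so a long failover fails fast instead of piling up blocked requests.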

Scalability: Horizontal and Vertical Growth :

Scalability considerations in the ElastiCache vs Redis comparison break into two categories: scaling to handle more data (horizontal, via sharding) and scaling to handle more throughput (both horizontal via replicas and vertical via larger node types).

Scaling Self-Managed Redis :

Vertical scaling on self-managed Redis involves stopping the instance, launching a larger EC2 instance, and restoring data. This typically requires downtime unless you’ve set up a replica to promote first. Horizontal scaling via Redis Cluster is more complex. Adding shards requires resharding, which migrates keyslots (and the keys in them) across the new topology. There is no single server-side rebalance command; the usual tooling is redis-cli’s --cluster reshard and --cluster rebalance options. Resharding a live cluster with significant data is a non-trivial operation, and it requires careful monitoring to avoid performance impact while slots are migrating.
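To get a feel for how much data moves when you add a shard, the sketch below computes the minimum number of hash slots that must migrate to reach an even layout. It relies only on the fact that Redis Cluster always has 16384 slots. The example layouts are hypothetical, and your resharding tooling still performs the actual migration.

```python
TOTAL_SLOTS = 16384  # Redis Cluster always has 16384 hash slots


def even_targets(shard_count: int) -> list[int]:
    """Slot counts for a perfectly even layout, largest first."""
    base, extra = divmod(TOTAL_SLOTS, shard_count)
    return [base + 1] * extra + [base] * (shard_count - extra)


def min_slots_to_move(current: list[int], new_shard_count: int) -> int:
    """Minimum slots that must migrate to reach an even layout.

    `current` holds the slot counts of existing shards; added shards start
    empty. Every slot above a shard's target has to move somewhere else.
    """
    if sum(current) != TOTAL_SLOTS:
        raise ValueError("current layout must cover all 16384 slots")
    if new_shard_count < len(current):
        raise ValueError("this sketch only models adding shards")
    counts = sorted(current + [0] * (new_shard_count - len(current)), reverse=True)
    # Pair the fullest shards with the largest targets to minimize moves.
    return sum(max(0, c - t) for c, t in zip(counts, even_targets(new_shard_count)))


moved = min_slots_to_move([5462, 5461, 5461], 4)  # 4096
```

Going from three evenly filled shards to four moves 4096 slots, a quarter of the keyspace. That volume of live key migration is why resharding a large cluster needs close monitoring.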

Scaling ElastiCache :

ElastiCache supports online scaling for several operations. You can add read replicas to increase read throughput without downtime. You can change node types (vertical scaling) with ElastiCache triggering a failover to the new node type. In cluster mode, you can add shards online to increase write throughput and expand total dataset capacity — ElastiCache handles the keyslot redistribution automatically.

ElastiCache Serverless takes scalability further by removing the capacity planning question entirely. You define no node type and no shard count. ElastiCache Serverless monitors demand and scales compute and memory automatically. You pay for ElastiCache Processing Units (ECPUs) consumed and GB of data stored, rather than a fixed hourly node rate. At low or intermittent utilization, this can be more cost-effective than provisioned nodes. At sustained high utilization, provisioned reserved nodes typically cost less per unit of throughput. The operational simplicity of Serverless is the primary draw — it eliminates a class of capacity planning decisions that would otherwise require periodic attention as your workload grows.

For teams expecting rapid or unpredictable growth (early-stage products, internal tools with spiky usage, or seasonal workloads), ElastiCache Serverless deserves serious consideration despite its higher per-unit cost at peak.

ElastiCache vs Redis Pricing: Where the Numbers Actually Land

Cost is frequently the primary argument for self-managed Redis, and it’s a real argument that deserves a rigorous examination — not a dismissal.

On-Demand Node Pricing :

ElastiCache node pricing is consistently higher than the equivalent EC2 instance type for the same hardware class. A cache.r7g.large in ElastiCache costs more per hour than an r7g.large EC2 instance on which you’d run Redis yourself. That delta is the managed service fee. It varies considerably by node type and region, so compare the current on-demand rates for your specific node type rather than assuming a fixed percentage.

For small deployments — a single cache node, a simple primary/replica pair — the absolute dollar difference per month is modest, often in the range of $20–$80 per node depending on instance size and region. The argument for self-managed Redis on cost grounds is less compelling at small scale because the engineering time required to set up and maintain even a simple self-managed Redis deployment quickly exceeds the cost savings.

Reserved Node Pricing :

ElastiCache offers reserved node pricing with 1-year and 3-year commitments, significantly reducing on-demand rates. A 1-year reserved ElastiCache node typically costs 30–40% less than on-demand pricing. A 3-year commitment often reduces costs by 50–60% relative to on-demand, depending on node type and region. EC2 capacity can be committed the same way, through Reserved Instances or Savings Plans, so compare committed ElastiCache pricing against committed EC2 pricing. On that like-for-like basis, the gap between ElastiCache and self-managed Redis narrows considerably once you honestly account for the operational overhead of running Redis yourself.

ElastiCache Serverless Pricing :

Serverless pricing is consumption-based: you pay per ECPU (ElastiCache Processing Unit) consumed and per GB of data stored per hour. Idle compute costs nothing, so a cache that receives no traffic pays only for storage. Storage is billed with a per-engine minimum, though, which gives even a near-empty cache a floor cost. That makes Serverless attractive for workloads with long quiet periods. For development environments and low-traffic internal tools, compare that floor against a small provisioned node before assuming Serverless is cheaper.

At sustained high utilization — a production cache handling thousands of requests per second continuously — provisioned reserved nodes will typically be cheaper on a per-operation basis. The break-even point varies by workload, but for high-throughput production caches, it’s worth running the numbers on both options before defaulting to Serverless.
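Here is a rough break-even sketch for this comparison. Every price in it is a hypothetical placeholder, not a current AWS rate, so substitute figures from the ElastiCache pricing page for your region and engine. The optional `min_billable_gb` parameter models a minimum billable storage amount, in case your engine has one. The model ignores data transfer and reserved-node discounts, so treat its output as a first approximation.

```python
HOURS_PER_MONTH = 730  # common approximation used for monthly cloud billing


def provisioned_monthly(node_hourly_usd: float, node_count: int) -> float:
    """Monthly cost of a fixed set of provisioned nodes."""
    return node_hourly_usd * node_count * HOURS_PER_MONTH


def _storage_monthly(avg_gb: float, usd_per_gb_hour: float, min_billable_gb: float) -> float:
    return max(avg_gb, min_billable_gb) * usd_per_gb_hour * HOURS_PER_MONTH


def serverless_monthly(
    avg_gb: float,
    usd_per_gb_hour: float,
    avg_ecpu_per_second: float,
    usd_per_million_ecpu: float,
    min_billable_gb: float = 0.0,
) -> float:
    """Monthly Serverless cost: metered storage plus ECPUs consumed."""
    ecpus = avg_ecpu_per_second * 3600 * HOURS_PER_MONTH
    return (_storage_monthly(avg_gb, usd_per_gb_hour, min_billable_gb)
            + ecpus / 1_000_000 * usd_per_million_ecpu)


def breakeven_ecpu_per_second(
    provisioned_usd: float,
    avg_gb: float,
    usd_per_gb_hour: float,
    usd_per_million_ecpu: float,
    min_billable_gb: float = 0.0,
) -> float:
    """Sustained ECPU rate above which provisioned nodes become cheaper."""
    remaining = provisioned_usd - _storage_monthly(avg_gb, usd_per_gb_hour, min_billable_gb)
    if remaining <= 0:
        return 0.0  # storage alone already costs more than the provisioned nodes
    ecpus_per_month = remaining / usd_per_million_ecpu * 1_000_000
    return ecpus_per_month / (3600 * HOURS_PER_MONTH)


# Hypothetical inputs, NOT current AWS prices: two nodes at $0.20/hour,
# 2 GB stored at $0.10/GB-hour, $3.00 per million ECPUs.
monthly_nodes = provisioned_monthly(0.20, 2)
rate = breakeven_ecpu_per_second(monthly_nodes, 2, 0.10, 3.00)
```

If your measured average ECPU rate sits well above the break-even figure, provisioned nodes are likely cheaper. If traffic spends long stretches near zero, Serverless likely wins.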

The Real Cost of Self-Managed Redis :

Any honest ElastiCache vs Redis cost comparison must include engineering time — and this is where many analyses fall short. Self-managed Redis doesn’t run itself. Someone needs to set up replication, configure Sentinel or Cluster, handle version upgrades (including validating behavioral changes and updating client libraries), build and test backup and restore procedures, configure monitoring and alerting, and respond to incidents.

A conservative estimate of even 4–8 hours per Redis cluster per month for ongoing maintenance — upgrades, incident response, monitoring review, occasional resharding — represents real labor cost. At a fully-loaded engineering cost of $100–$200/hour, a single self-managed Redis cluster that saves $50/month in compute costs compared to ElastiCache may be a net negative once labor is included.
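The arithmetic above can be written out so you can plug in your own numbers. The inputs below are the illustrative figures from the text ($50/month saved, 4–8 hours, $100–$200/hour), not measurements.

```python
def net_monthly_savings(
    compute_savings_usd: float,
    maintenance_hours: float,
    loaded_hourly_rate_usd: float,
) -> float:
    """Compute savings from self-managing, minus the engineering time it consumes."""
    return compute_savings_usd - maintenance_hours * loaded_hourly_rate_usd


def breakeven_hours(compute_savings_usd: float, loaded_hourly_rate_usd: float) -> float:
    """Monthly maintenance hours at which self-managing stops saving money."""
    return compute_savings_usd / loaded_hourly_rate_usd


best_case = net_monthly_savings(50, 4, 100)   # -350
worst_case = net_monthly_savings(50, 8, 200)  # -1550
limit = breakeven_hours(50, 100)              # 0.5 hours per month
```

At these rates, the $50 saving disappears after half an hour of engineering time per month.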

The calculation shifts at scale: organizations running many self-managed Redis clusters with dedicated infrastructure teams can amortize the expertise across many clusters, making self-managed more economical. But the break-even point is higher than most cost analyses suggest when engineering time is honestly valued.

Redis Cloud vs ElastiCache: The Third Option You Should Know About :

Any thorough ElastiCache vs Redis guide needs to address Redis Cloud — the managed Redis service offered by Redis Ltd., the commercial company behind the open-source Redis project. It’s a serious option for specific use cases and deserves more than a footnote.

Redis Cloud can run on AWS infrastructure and is deployable in many of the same AWS regions as your ElastiCache clusters. In terms of latency and VPC integration, it can be placed comparably to ElastiCache. Where Redis Cloud differentiates itself is feature depth. It supports the full Redis Enterprise feature set, which includes capabilities that ElastiCache for Redis simply doesn’t offer.

Active-active geo-replication allows Redis Cloud to synchronize data across multiple cloud regions with conflict-free replication, enabling true multi-region active-active architectures. This is architecturally complex to build yourself. ElastiCache doesn’t offer it either: its Global Datastore feature replicates across regions, but only one primary region accepts writes.

Redis modules such as RediSearch (full-text search), RedisJSON (native JSON document handling), RedisTimeSeries (time-series data), and others are fully supported in Redis Cloud Enterprise tiers. ElastiCache doesn’t let you load Redis modules. It does provide a native JSON data type on Redis OSS 6.2 and later, but full-text search and time-series modules aren’t available. If your application needs to run full-text search queries directly against Redis, rely on RedisJSON features beyond ElastiCache’s native JSON support, or manage time-series data within your cache layer, Redis Cloud is often the most practical path.

Multi-cloud deployments — workloads that need to span AWS, GCP, and Azure — are supported by Redis Cloud in a way that managed services tied to a single cloud provider cannot match. If your infrastructure is deliberately multi-cloud, Redis Cloud’s cloud-agnostic management layer is a genuine advantage.

The premium for Redis Cloud over ElastiCache is real and meaningful. For purely AWS-native workloads that don’t need Redis modules, active-active geo-replication, or multi-cloud distribution, ElastiCache for Redis is typically the more cost-efficient choice. Redis Cloud earns its premium when you genuinely need what it uniquely offers.

ElastiCache vs Redis Use Cases: Matching the Tool to the Job

Session Storage :

Session caching is one of Redis’s most common use cases, and both ElastiCache and self-managed Redis handle it well. The practical choice depends on your team’s operational capacity. ElastiCache is the lower-friction path for AWS-native applications — VPC integration, IAM-based access control, and automated failover mean you spend almost no operational time on your session cache infrastructure. Self-managed Redis works equally well if your team has Redis expertise and the bandwidth to maintain it.

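One specific consideration for session storage: with Multi-AZ failover, if an ElastiCache primary node fails, sessions can continue to be served from the promoted replica with minimal disruption. Writes that hadn’t yet replicated when the primary failed can still be lost, because Redis replication is asynchronous. With self-managed Redis and Sentinel, the failover window depends on how you tune detection and failover timeouts. Keeping that window short without triggering false failovers takes careful tuning.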

Database Query Caching :

Reducing database load by caching frequent query results benefits enormously from low-latency, high-throughput caching, which is exactly what Redis excels at. For AWS-native applications, ElastiCache for Redis is a natural fit. It sits inside your VPC, close to your application tier and your RDS instances, and handles failover without manual intervention. The most common pattern, cache-aside, reads from the cache first, falls through to the database on a miss, and writes the result back to the cache. That logic lives in your application code, so ElastiCache needs no special configuration to support it beyond an endpoint your application can connect to.
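Here is a minimal cache-aside sketch. It assumes a client with redis-py-style `get(name)` and `set(name, value, ex=...)` methods. JSON serialization, the five-minute TTL, and the `user:42` key are illustrative choices. `InMemoryCache` is a hypothetical stand-in so the example runs without a server; in production you would pass a `redis.Redis` client pointed at your ElastiCache endpoint instead.

```python
import json
from typing import Any, Callable, Optional


def get_or_load(
    cache: Any,                       # redis-py style client with get/set
    key: str,
    load_from_db: Callable[[], Any],
    ttl_seconds: int = 300,
) -> Any:
    """Cache-aside read: try the cache, fall back to the database, write back."""
    cached = cache.get(key)
    if cached is not None:
        return json.loads(cached)     # cache hit
    value = load_from_db()            # cache miss: query the source of truth
    cache.set(key, json.dumps(value), ex=ttl_seconds)  # TTL bounds staleness
    return value


class InMemoryCache:
    """Hypothetical stand-in for a Redis client (stores bytes, ignores TTLs)."""

    def __init__(self) -> None:
        self.data: dict[str, bytes] = {}

    def get(self, name: str) -> Optional[bytes]:
        return self.data.get(name)

    def set(self, name: str, value: str, ex: Optional[int] = None) -> bool:
        self.data[name] = value.encode()
        return True


cache = InMemoryCache()
user = get_or_load(cache, "user:42", lambda: {"id": 42, "name": "Ada"})
```

On writes, the usual companion step is to delete or overwrite the cached key so readers don’t see stale data until the TTL expires.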

For teams already using Amazon RDS or Aurora, pairing with ElastiCache for Redis is a well-documented, well-supported architecture with clear AWS documentation and established client library patterns.

Real-Time Leaderboards, Counters, and Rate Limiting :

Redis’s sorted sets, HyperLogLog, and atomic increment operations make it an outstanding engine for real-time leaderboards, vote counts, rate limiters, and counters. These data structures are fully supported in ElastiCache for Redis — you don’t need a self-managed Redis instance to use sorted sets or atomic INCR operations. Self-managed Redis supports the same capabilities with no functional difference.
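As an example of atomic counters, here is a minimal fixed-window rate limiter built on INCR and EXPIRE. It assumes a redis-py-style client with `incr()` and `expire()` methods. `FakeCounterStore` is a hypothetical stand-in so the sketch runs without a server, and the key format is illustrative. Because INCR is atomic on the server, the count stays correct even when many application instances hit the same key.

```python
import time
from typing import Any, Callable


def allow_request(
    client: Any,                       # redis-py style client with incr/expire
    user_id: str,
    limit: int,
    window_seconds: int,
    now: Callable[[], float] = time.time,
) -> bool:
    """Fixed-window rate limiter: at most `limit` calls per window per user."""
    window = int(now() // window_seconds)
    key = f"ratelimit:{user_id}:{window}"  # one counter per user per window
    count = client.incr(key)               # atomic increment on the server
    if count == 1:
        # First hit in this window: let the counter expire with the window.
        client.expire(key, window_seconds)
    return count <= limit


class FakeCounterStore:
    """Hypothetical stand-in for a Redis client; records TTLs, doesn't enforce them."""

    def __init__(self) -> None:
        self.counts: dict[str, int] = {}
        self.ttls: dict[str, int] = {}

    def incr(self, name: str, amount: int = 1) -> int:
        self.counts[name] = self.counts.get(name, 0) + amount
        return self.counts[name]

    def expire(self, name: str, seconds: int) -> bool:
        self.ttls[name] = seconds
        return True
```

If a process dies between INCR and EXPIRE, the counter key is left without a TTL. The limit still resets, because each window uses its own key, but the stale key lingers in memory. Sending both commands in a MULTI/EXEC transaction or a small Lua script closes that gap.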

The choice here comes down entirely to operational preference, not capability. Both options can serve a leaderboard at sub-millisecond latency. If your team is already using ElastiCache for session storage and query caching, using it for leaderboards adds no new infrastructure complexity. If you’re greenfielding and want full configuration control, self-managed works equally well.

Pub/Sub and Lightweight Messaging :

Redis’s publish/subscribe model provides a simple, effective mechanism for broadcasting messages between application components. ElastiCache for Redis supports Pub/Sub natively. However, it’s important to understand the durability model: Redis Pub/Sub messages are not persisted. If a subscriber is offline when a message is published, it’s lost. For production pub/sub workloads where message durability matters, Redis Streams (also supported in ElastiCache) or AWS-native messaging services like Amazon SQS or SNS may be more appropriate.

Redis Streams via ElastiCache is worth examining for workloads that need ordered, persistent, consumer-group-aware message processing within the same cache infrastructure — it combines the low-latency characteristics of Redis with durability semantics closer to a message queue.

Machine Learning Feature Stores :

In-memory feature stores for ML inference require very low read latency at high throughput — the inference pipeline reads feature values for each prediction, often in parallel, and any latency in feature retrieval directly adds to inference response time. ElastiCache for Redis is well-suited to this role, particularly for applications already deployed on AWS where the VPC integration and CloudWatch observability are valuable.

ElastiCache Serverless is especially worth considering for inference-serving feature stores, because inference traffic is often bursty — high during peak usage periods, low overnight — and Serverless eliminates the capacity headroom you’d otherwise need to provision for peak load.

Workloads Needing Redis Modules :

If your application needs RediSearch for full-text search, RedisTimeSeries for time-series data management, or RedisJSON features beyond ElastiCache’s native JSON data type, standard ElastiCache for Redis is not the right choice, because it doesn’t let you load Redis modules. Your options are:

- Self-managed Redis on EC2 with modules installed (full control, full operational burden)

- Amazon MemoryDB for Redis (offers some module-like capabilities such as JSON, and provides durable storage)

- Redis Cloud (full Redis Enterprise module catalog, higher cost)

For this specific requirement, the ElastiCache vs Redis decision is made for you: if you need modules, you’re looking at one of the alternatives above.

ElastiCache vs Redis Architecture: Clustering and Topology

ElastiCache Cluster Mode Disabled (Single Shard)

The simplest ElastiCache topology is one primary node with up to five read replicas, all serving the same dataset. This is the right choice for datasets that fit comfortably in a single node’s memory, and for workloads whose primary constraint is read throughput rather than write throughput or total dataset size. Adding read replicas scales your read capacity roughly linearly, because each replica can independently serve read traffic.
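In a cluster-mode-disabled group, ElastiCache exposes a primary endpoint for writes and a reader endpoint that spreads connections across the replicas. The routing sketch below assumes two redis-py-style clients, one connected to each endpoint. The class name and the `require_fresh` flag are hypothetical. Because replication is asynchronous, reads that must see a just-completed write go to the primary.

```python
from typing import Any, Optional


class ReadWriteRouter:
    """Route writes to the primary endpoint and reads to the reader endpoint."""

    def __init__(self, primary: Any, reader: Any) -> None:
        self.primary = primary  # client connected to the primary endpoint
        self.reader = reader    # client connected to the reader endpoint

    def set(self, key: str, value: str, ex: Optional[int] = None) -> Any:
        return self.primary.set(key, value, ex=ex)

    def get(self, key: str, require_fresh: bool = False) -> Any:
        # Replicas can lag the primary slightly; read-your-own-write paths
        # opt into the primary, and everything else offloads to the replicas.
        client = self.primary if require_fresh else self.reader
        return client.get(key)
```

Most cache reads tolerate a few milliseconds of replication lag, so sending them to replicas is usually safe. Reserve `require_fresh=True` for flows such as “update profile, then show profile”.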

ElastiCache Cluster Mode Enabled (Multiple Shards)

For datasets too large for a single node, or for workloads that require higher write throughput than a single primary can provide, cluster mode distributes data across multiple shards — up to 500 shards in ElastiCache’s maximum supported configuration. Each shard has its own primary and replicas. ElastiCache manages the keyslot distribution and handles shard failover automatically.

Cluster mode requires your Redis client library to support the Redis Cluster protocol, which all major modern clients do. The one operational consideration is multi-key operations. In a clustered topology, every key referenced by a single multi-key command (such as MGET or MSET), a MULTI/EXEC transaction, or a Lua script must hash to the same keyslot, or the server rejects the operation with a CROSSSLOT error. Using hash tags to force co-location of related keys is the standard pattern for managing this constraint.
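To check which keys can share a multi-key command, the sketch below reimplements the Redis Cluster key-to-slot mapping from the cluster specification. The slot is CRC16 (XMODEM variant) of the key modulo 16384. If the key contains a hash tag, meaning non-empty text between the first “{” and the next “}”, only that text is hashed. The sketch is meant for reasoning about key design; in a live cluster, CLUSTER KEYSLOT returns the authoritative answer.

```python
def crc16_xmodem(data: bytes) -> int:
    """CRC16 variant used by Redis Cluster (XMODEM: poly 0x1021, init 0)."""
    crc = 0
    for byte in data:
        crc ^= byte << 8
        for _ in range(8):
            if crc & 0x8000:
                crc = ((crc << 1) ^ 0x1021) & 0xFFFF
            else:
                crc = (crc << 1) & 0xFFFF
    return crc


def hash_tag(key: bytes) -> bytes:
    """Return the part of the key Redis Cluster hashes (the {tag}, if any)."""
    start = key.find(b"{")
    if start != -1:
        end = key.find(b"}", start + 1)
        if end > start + 1:  # an empty "{}" means the whole key is hashed
            return key[start + 1:end]
    return key


def key_slot(key: str) -> int:
    return crc16_xmodem(hash_tag(key.encode())) % 16384


def same_slot(*keys: str) -> bool:
    """True if a multi-key command on these keys avoids a CROSSSLOT error."""
    return len({key_slot(k) for k in keys}) == 1


same_slot("user:{42}:profile", "user:{42}:cart")  # True: both hash only "42"
```

The trade-off of hash tags is distribution. Every key sharing a tag lands on one shard, so tag by something with many distinct values (a user ID, not a country code).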

Self-Managed Redis Cluster :

A self-managed Redis Cluster provides equivalent sharding and failover capabilities. You configure the cluster topology, manage resharding when you need to add or remove shards, and monitor cluster health. The operational complexity is higher than ElastiCache cluster mode, but the configuration flexibility is also higher — you control every aspect of the cluster layout.

Amazon MemoryDB for Redis vs ElastiCache :

A note on MemoryDB because it’s frequently confused with ElastiCache in the ElastiCache vs Redis discussion: MemoryDB for Redis stores all data durably in a multi-AZ distributed transaction log, making it suitable as a primary database rather than a cache. You don’t risk data loss on failover or node replacement. ElastiCache, by contrast, is optimized for caching scenarios where the authoritative data lives in a durable database and the cache is an acceleration layer — losing cached data on failure is acceptable because you can repopulate from the source of truth. Choose MemoryDB when durability is a hard requirement; choose ElastiCache when performance and cost are the primary drivers and some data loss on rare failure events is acceptable.

Security and Compliance in ElastiCache vs Redis :

For regulated industries or security-sensitive environments, the ElastiCache vs Redis comparison includes a security and compliance dimension that’s easy to overlook but practically important.

ElastiCache Security Capabilities :

ElastiCache for Redis provides a comprehensive security stack that integrates natively with AWS’s security services:

- Encryption at rest via AWS KMS, with customer-managed key support

- Encryption in transit via TLS, enforced at the cluster level

- VPC isolation — ElastiCache clusters run inside your VPC with no public internet exposure by default

- IAM authentication (available on Redis OSS 7.0 and later with encryption in transit enabled), allowing you to use IAM roles and policies to control Redis access

- Redis AUTH tokens for legacy authentication compatibility

- Audit logging via CloudTrail for control-plane operations

For organizations pursuing SOC 2 Type II, HIPAA, or PCI-DSS compliance, AWS’s managed services — including ElastiCache — benefit from AWS’s existing compliance certifications and audit infrastructure. Your compliance auditor can review ElastiCache’s security controls within the same AWS compliance framework that covers your other AWS services.

Self-Managed Redis Security :

Self-managed Redis on EC2 supports the same underlying security capabilities — TLS, Redis AUTH, VPC network isolation — but each layer requires independent configuration, maintenance, and audit documentation. TLS certificate management, key rotation, and network security group rules are all your responsibility. Redis doesn’t come with encryption at rest by default; you need to configure EBS encryption at the volume level and manage that separately.

For compliance purposes, self-managed infrastructure requires you to document and evidence your own security controls rather than relying on AWS’s shared responsibility documentation. This is achievable but represents additional work, particularly during initial audit cycles.

Migrating from Self-Managed Redis to ElastiCache :

If you’re currently running self-managed Redis on EC2 and evaluating a move to ElastiCache, the migration process is straightforward mechanically — but deserves careful planning.

Option 1: Live Replication Cutover

The cleanest migration path uses Redis replication. ElastiCache restricts commands such as REPLICAOF, so you don’t point the new cluster at your instance by hand. Instead, ElastiCache’s online migration feature replicates data from your self-managed primary into the new cluster. Allow replication to synchronize fully, then validate data integrity. At your chosen cutover moment, complete the migration, which ends the replication relationship, and update your application’s Redis connection string to point at the ElastiCache endpoint. This approach keeps downtime close to zero because your application traffic switches to a fully synchronized ElastiCache cluster.

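After cutover, allow your existing self-managed instance to run for a validation period (typically 24–48 hours) before decommissioning it, in case you need to roll back. Writes made to ElastiCache after cutover won’t exist on the old instance, so a rollback means accepting or re-syncing that gap.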
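On ElastiCache, the replication step is driven through the service’s online migration API rather than by running REPLICAOF yourself. The minimal sketch below calls the StartMigration and CompleteMigration operations through a boto3 ElastiCache client. The group ID and source address are hypothetical, and the feature has prerequisites on both source and target, so check the ElastiCache online migration documentation first. Passing the client in lets the functions be tested with a fake.

```python
from typing import Any


def start_online_migration(
    client: Any, target_group_id: str, source_host: str, source_port: int = 6379
) -> Any:
    """Ask ElastiCache to start replicating from a self-managed Redis primary."""
    return client.start_migration(
        ReplicationGroupId=target_group_id,
        CustomerNodeEndpointList=[{"Address": source_host, "Port": source_port}],
    )


def finish_online_migration(client: Any, target_group_id: str) -> Any:
    """End replication once validation passes and you are ready to cut over."""
    # Force=False: don't abandon the sync; Force=True is meant for aborting.
    return client.complete_migration(ReplicationGroupId=target_group_id, Force=False)


# Usage with a real client (requires AWS credentials):
#   ec = boto3.client("elasticache")
#   start_online_migration(ec, "orders-cache", "10.0.1.10")
#   ... validate data, then at cutover:
#   finish_online_migration(ec, "orders-cache")
```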

Option 2: Snapshot Import

AWS supports importing an existing Redis RDB (dump.rdb) snapshot directly into ElastiCache during cluster creation. Upload your snapshot to S3, grant ElastiCache read access to the object, and specify it as the seed data source when creating your ElastiCache cluster; the cluster will then restore from the snapshot. This is a point-in-time migration. You’ll need to either accept the data gap between snapshot time and cutover, or replay any writes that occurred after the snapshot using a migration queue.

Application-Side Changes :

Application-side changes are minimal in most migrations. Typically, you update one configuration value: the Redis connection string. If you’re migrating from a non-clustered Redis to ElastiCache in cluster mode, you’ll also need to ensure your client library has Redis Cluster support enabled and review any multi-key operations for keyslot co-location requirements.

Testing your application against the ElastiCache endpoint in a staging environment before production cutover catches the vast majority of compatibility issues. The full migration is typically a one-sprint project for a containerized application, longer for monolithic applications with more complex dependency structures.

Decision Framework: ElastiCache vs Redis

After examining every dimension of the ElastiCache vs Redis comparison, the decision framework is cleaner than most architecture decisions.

Choose ElastiCache for Redis when:

- You want AWS to own patching, failover, and backup operations

- Your production workload requires high availability and your team can’t afford to build and maintain a Sentinel or Cluster setup themselves

- Deep AWS integration — IAM, KMS, CloudWatch, VPC — is important for your security or compliance posture

- You’re building new infrastructure on AWS and want to minimize cross-service operational friction

- Your team doesn’t have dedicated Redis operations expertise

- You want Serverless scaling for variable or unpredictable traffic

- You’re running many services and want consistent, managed caching infrastructure across them

Choose self-managed Redis on EC2 when:

- You need Redis modules (RediSearch, RedisTimeSeries, or RedisJSON features beyond ElastiCache’s native JSON support) that ElastiCache doesn’t let you load

- You need full redis.conf configuration control for highly specific performance tuning

- You’re running a multi-cloud or hybrid on-premises/cloud architecture where a single-cloud managed service creates lock-in

- Your team has strong Redis operational expertise and the bandwidth to apply it

- You’re cost-optimizing aggressively at very large scale where the engineering investment in self-management is justified

- You need a Redis version not yet supported by ElastiCache’s release track

Choose Redis Cloud when:

- You need active-active geo-replication across multiple regions or cloud providers

- You need the full Redis Enterprise module catalog

- Your infrastructure spans multiple cloud providers and you need consistent Redis management across all of them

Frequently Asked Questions

1. Is ElastiCache the same as Redis?

No. ElastiCache is a managed AWS service that uses Redis as an engine, but AWS handles operations like maintenance and scaling.

2. Is ElastiCache for Redis more expensive than self-managed Redis on EC2?

Per node, yes. But when you include engineering and maintenance costs, it can be cost-neutral or even cheaper.

3. Can I use Redis modules with ElastiCache?

Not as loadable modules. Standard ElastiCache does not support modules like RediSearch or RedisTimeSeries, though it does provide a native JSON data type on Redis OSS 6.2 and later.

4. What is ElastiCache Serverless and when should I use it?

It’s an auto-scaling version of ElastiCache. Best for unpredictable or variable workloads.

5. How does ElastiCache handle failover?

It automatically promotes a replica to primary within seconds to a couple of minutes, with no manual work needed.

Conclusion :

The ElastiCache vs Redis decision is ultimately a question of operational philosophy as much as it is a technical comparison. Both options run the same underlying Redis engine. Both can deliver sub-millisecond latency. Both support the full Redis data structure and command set for standard use cases. The difference is in who owns the operational complexity — and how much that ownership costs in both money and engineering time.

For most AWS-native teams, ElastiCache for Redis is the clear default. It removes a meaningful slice of operational work — provisioning, patching, failover, and backup — and integrates seamlessly with the security, monitoring, and networking infrastructure your team is already using on AWS. The per-node cost premium is real, but it is frequently justified — especially once engineering time is honestly included in the cost comparison.

With the right implementation strategy, partners like GoCloud can further simplify the transition by handling migration, optimization, and cost management, helping ensure your ElastiCache deployment is both efficient and scalable from day one.