How to Migrate Mainframe to AWS | The 2026 Complete Guide

The pressure to migrate mainframe to AWS has never been more acute. COBOL contractor rates in North America now exceed $250 per hour, 71% of mainframe teams are understaffed, and organizations running IBM z14, z15, or z16 systems on per-MIPS licensing models are watching their infrastructure bills compound at 15–20% annually. Meanwhile, AWS has assembled the most comprehensive mainframe modernization toolchain in the industry, spanning automated COBOL refactoring, agentic AI-powered code analysis, DB2 database migration, and JCL batch job replacement, and was named a Leader in the ISG Provider Lens Mainframe Application Modernization Software 2025 report.

This guide provides a technically rigorous, phase-by-phase framework for enterprise architects, IT directors, and legacy systems engineers navigating an IBM mainframe to AWS migration in 2026.

The Mainframe Crisis: Why Organizations Are Migrating to AWS Now

The Talent Shortage Problem — Where Have All the COBOL Developers Gone?

The mainframe skills crisis is no longer a forecast — it has arrived. According to Franklin Skills’ 2025 research:

- 71% of mainframe teams are currently understaffed

- 93% of organizations rate finding qualified mainframe talent as “moderately to extremely difficult”

- 54% of mainframe teams report being underfunded relative to business requirements

- 91% of organizations plan to hire mainframe system administrators or COBOL developers within one to two years — yet the pipeline of qualified candidates is structurally shrinking

The average age of an experienced COBOL developer is now over 55. University computer science programs stopped teaching COBOL decades ago. The result is a talent arbitrage problem: organizations competing for a diminishing pool of specialists, driving COBOL contractor rates above $250/hour — rates that compound the already-substantial operational cost of the platforms themselves.

Mainframe Operating Costs Are Exploding — The Real Numbers

IBM mainframe software licensing operates on a usage-based model measured in MIPS (Millions of Instructions Per Second) or its modern equivalent MSU (Million Service Units). The economics are severe:

- Large mainframes running >11,000 MIPS incur software licensing costs of $1,000–$2,000 per MIPS annually — meaning an 11,000-MIPS workload carries a software licensing bill of $11M–$22M per year, before hardware, facilities, or staffing costs

- Average per-MIPS costs in 2025 are estimated at approximately $20,340 when all-in operational costs are included (Elnion, 2025)

- Hardware refresh cycles for IBM z16 systems are 5–7 years and carry eight-figure capital expenditures

- Data centre costs (power, cooling, floor space) for mainframe environments typically represent 20–30% of the total cost of ownership (TCO)
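As a rough illustration of the licensing arithmetic in the first bullet, the sketch below multiplies a MIPS figure by the quoted $1,000–$2,000 per-MIPS range. The function name and default rates are illustrative only; real IBM contracts are tiered and negotiated, so treat the result as an order-of-magnitude estimate.

```python
def annual_licensing_range(mips, low_rate=1_000, high_rate=2_000):
    """Return (low, high) annual software licensing cost in USD.

    Assumes a flat per-MIPS rate, which is a simplification of
    IBM's tiered pricing.
    """
    return mips * low_rate, mips * high_rate


low, high = annual_licensing_range(11_000)
print(f"${low / 1e6:.0f}M to ${high / 1e6:.0f}M per year")  # $11M to $22M per year
```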

A 2025 EPAM analysis confirmed that comparable AWS infrastructure can deliver approximately 90% in cost savings compared to an equivalent 11,000-MIPS mainframe environment — the most frequently cited benchmark in the industry.

End-of-Life Mainframe Platforms Driving Urgency

IBM publishes end-of-marketing and end-of-service dates for older z/OS versions and their associated middleware stacks. Organisations still running z/OS 2.4 or earlier face both support cost escalation and security exposure as unpatched vulnerabilities accumulate. For US federal agencies, the picture is starker: the federal government spends an estimated $2.4 billion annually on legacy mainframe systems, and AWS analysis estimates that systematic migration could save $1 billion by 2030.

What Does It Mean to Migrate a Mainframe to AWS?

Definition: To migrate a mainframe to AWS means systematically moving IBM z/OS workloads (COBOL applications, JCL batch jobs, CICS transaction programs, IMS or DB2 databases, and VSAM file stores) off physical mainframe hardware onto AWS cloud services such as EC2, Lambda, Aurora, S3, and Step Functions, using strategies ranging from emulation-based rehosting to fully automated COBOL-to-Java refactoring via tools like AWS Blu Age and AWS Transform.

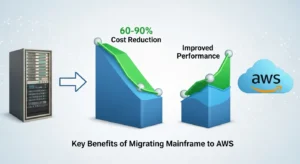

Key Benefits of Migrating Mainframe to AWS

60–90% Cost Reduction — The Financial Case

The financial case for mainframe to AWS migration is the strongest it has ever been:

- 90% cost reduction achieved by refactoring to AWS (Jonas Fitness, cited by EPAM/Medium)

- 57% cost savings reported by a leading employee benefits company after replatforming mainframe applications to cloud

- Average annualized savings of at least $23.3 million post-migration (EPAM Mainframe Modernization ROI analysis, 2025)

- Average modernization project cost dropped from $9.1M (2024) to $7.2M (2025), while ROI more than doubled year-over-year (Kyndryl 2025 State of Mainframe Modernization Survey)

The shift from CAPEX (hardware purchase + multi-year software licences) to OPEX (pay-per-use EC2 + Aurora + Lambda) fundamentally transforms the IT finance model. Reserved Instances and Savings Plans on AWS further reduce steady-state compute costs by 40–72% versus on-demand pricing.

2x–3x Better Performance vs. Legacy Mainframe

AWS Graviton3 and Graviton4 processors deliver compelling compute density for workloads that were previously pinned to vertical mainframe scaling. Applications refactored from COBOL to Java Spring Boot and deployed on EC2 Auto Scaling groups routinely outperform their mainframe equivalents on per-transaction throughput benchmarks — while enabling horizontal scaling that MIPS-based pricing actively penalises.

Access to Modern DevOps, AI/ML, and Analytics

Perhaps the most strategically significant benefit of mainframe migration is unlocking access to AWS’s broader ecosystem: CI/CD pipelines via CodePipeline and CodeBuild, ML inference via Amazon SageMaker, real-time analytics via Amazon Kinesis and Amazon Redshift, and — critically in 2026 — generative and agentic AI capabilities via Amazon Bedrock. None of these integration points are practical in a mainframe-native architecture.

Eliminating the Mainframe Skill Gap

Once COBOL applications are refactored to Java or .NET, the resulting codebase is maintainable by the global pool of Java and .NET developers — dramatically expanding the talent market available to the organisation. AWS Transform’s agentic AI can also generate comprehensive technical documentation for undocumented legacy code, directly addressing the institutional knowledge loss that accelerates as senior COBOL developers retire.

Improved Agility and Innovation Speed

Mainframe release cycles are measured in months; cloud deployment cycles are measured in minutes. AWS CodePipeline with automated regression testing enables continuous delivery patterns that are structurally impossible on z/OS production systems, where change management processes are necessarily conservative given the monolithic, mission-critical nature of the environment.

Industry Data — Companies Saving 60–80% After Mainframe Migration

- Fitness Software Company (Jonas Fitness): 90% cost reduction post-refactoring to AWS

- Employee Benefits Enterprise: 57% cost savings via replatforming

- Financial Services Firm (NTT DATA): Mainframe MIPS consumption reduced by one-third

- Kyndryl 2025 Survey: Average modernization ROI more than doubled year-over-year

- EPAM Analysis: 90% savings potential vs. 11,000-MIPS mainframe

- US Federal Government: Projected $1B in savings by 2030 through mainframe migration

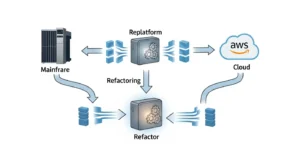

Mainframe to AWS Migration Strategies — Choose Your Approach

Not every mainframe application warrants the same modernization motion. A disciplined strategy selection process — applied per application, not per programme — is the single most important architectural decision in any mainframe migration programme.

| Strategy | What It Means | Effort | Risk | Cost | Timeline | Best For |
| --- | --- | --- | --- | --- | --- | --- |
| Rehost (Lift & Shift) | Emulate z/OS environment on AWS using Micro Focus (OpenText) | Low–Medium | Low | Medium | 3–9 months | Mission-critical apps with immovable deadlines; as interim step |
| Replatform | Move COBOL/JCL to managed AWS services with minimal code changes | Medium | Medium | Medium | 6–18 months | Batch-heavy workloads; DB2 → Aurora migrations |
| Refactor (Re-architect) | Convert COBOL to Java/Spring Boot; JCL to Step Functions; DB2 to Aurora | High | High | High upfront; max savings | 12–36 months | Core banking, insurance, ERP systems with long service life |
| Replace | Retire custom mainframe apps in favour of SaaS equivalents | Low | Low–Medium | Low migration; new SaaS OPEX | 3–12 months | HR, CRM, reporting workloads with commercial SaaS equivalents |

Rehost (Lift & Shift) — Emulate Mainframe on AWS

Rehosting uses Micro Focus Enterprise Server (now OpenText) or compatible runtimes to emulate the COBOL and JCL execution environment on Amazon EC2 without modifying the source code. The z/OS application runs as compiled native binaries on Linux-based EC2 instances, providing a validated execution environment with minimal application risk. This approach is not the end state — it is a derisking first step that eliminates per-MIPS software licensing costs immediately while preserving application functionality.

AWS service mapping for rehost: COBOL binary → EC2 (x86 or Arm); DB2 → Amazon RDS for PostgreSQL (initial); VSAM → in-memory VSAM emulation or S3-backed abstraction layer; JES2/JES3 job scheduling → BMC Workload Automation or UC4 on EC2.

Replatform: Move COBOL to AWS Managed Services

Replatforming retains COBOL source code but replaces the execution environment. AWS Mainframe Modernization Service (where still available to existing customers) and partner tooling translate COBOL programs into managed runtime environments on AWS, replacing JCL schedulers with Amazon EventBridge, IMS or VSAM flat files with Amazon S3, and CICS transaction management with containerized microservices on Amazon ECS or EKS.

Refactor (Re-architect) — Full Modernization

Full refactoring converts COBOL source code to Java Spring Boot or .NET using automated tooling such as AWS Blu Age or Astadia FastTrack, replaces DB2 databases with Amazon Aurora PostgreSQL using AWS DMS and the AWS Schema Conversion Tool (SCT), migrates VSAM files to structured data in Aurora or S3 with AWS Glue ETL pipelines, and replaces JCL batch job streams with AWS Step Functions state machine workflows triggered by Amazon EventBridge.

This strategy delivers the maximum ROI but requires the longest timeline and the most rigorous testing regime.

Replace — Retire Mainframe Apps for SaaS Alternatives

A significant percentage of mainframe workloads — particularly HR, general ledger, and basic reporting — can be retired in favour of commercial SaaS solutions (Workday, SAP S/4HANA Cloud, Salesforce). The mainframe application is decommissioned; data is migrated to the SaaS platform via AWS DMS or custom ETL pipelines. This approach requires no COBOL expertise at all and is the fastest path to mainframe decommissioning for applicable workloads.

AWS Mainframe Modernization Services — What AWS Offers

AWS Transform for Mainframe — The 2026 Flagship Service

AWS Transform (aws.amazon.com/transform/mainframe) is AWS’s current-generation, agentic AI-powered mainframe modernization service, generally available since May 2025. It supersedes the earlier AWS Mainframe Modernization Service managed runtime (which closed to new customers on November 7, 2025) and represents a qualitative leap in automation capability.

Key capabilities of AWS Transform for mainframe:

- Agentic AI code analysis: Automatically analyzes COBOL programs, JCL job streams, and data structures to generate dependency maps, business logic documentation, and modernization plans — directly addressing the “undocumented COBOL” problem

- Accelerated conversion velocity: Demonstrated migration of 1.5 million lines of code per month at 30% lower project costs versus traditional manual approaches

- Composable architecture: Enables modular, workload-by-workload modernization rather than monolithic all-or-nothing programmes

- Built on 19 years of AWS migration experience, with patterns drawn from hundreds of enterprise mainframe migration engagements

AWS Blu Age — Automated COBOL to Java Refactoring

AWS Blu Age performs fully automated source code transformation of legacy programs (COBOL, including CICS and IMS DC applications, as well as Natural/ADABAS and PL/I) into Java Spring Boot microservices. Key architectural characteristics:

- Produces 100% automated initial conversion — no manual COBOL-to-Java rewriting required

- Output is idiomatic Java Spring Boot code, compatible with standard Java CI/CD pipelines and maintainable by Java developers without COBOL knowledge

- Preserves business logic fidelity — the transformed Java code produces bit-identical outputs to the original COBOL for regression testing

- Supports COBOL dialect coverage including IBM Enterprise COBOL, ACUCOBOL, MicroFocus COBOL, and Fujitsu COBOL variants

- Integrated with AWS Mainframe Modernization workbench IDE for analysis and transformation management

- Monthly minor version releases; major version releases for impactful dependency changes

Important 2025/2026 note: The AWS Mainframe Modernization Service Managed Runtime Environment (the replatforming path using Micro Focus on AWS) is no longer accepting new customers as of November 7, 2025. AWS Transform is the recommended path for new engagements. Existing Managed Runtime customers continue to receive support.

Micro Focus (OpenText) on AWS — Replatforming Option

For organisations selecting the rehost or replatform path, Micro Focus Enterprise Server (which passed to OpenText with the Micro Focus acquisition and was sold on to Rocket Software in 2024) provides COBOL and JCL runtime emulation on Amazon EC2. This approach:

- Requires zero COBOL source code modification

- Runs COBOL programs natively on Linux EC2 instances

- Supports CICS emulation, JES2/JES3-compatible job scheduler, and VSAM abstraction

- Functions as a validated intermediate derisking step before full refactoring

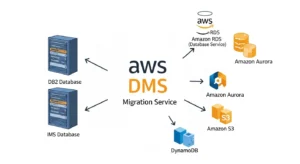

AWS DMS for Migrating DB2 and IMS Databases

AWS Database Migration Service (DMS) supports heterogeneous migration from IBM DB2 LUW and DB2 for z/OS to Amazon Aurora PostgreSQL and Amazon RDS for PostgreSQL. The workflow combines:

- AWS Schema Conversion Tool (SCT): Converts DB2 DDL, stored procedures, functions, and triggers to Aurora PostgreSQL-compatible equivalents, flagging unconvertible elements for manual review

- AWS DMS full load: Initial bulk data transfer from DB2 to Aurora

- AWS DMS CDC (Change Data Capture): Continuous replication of DB2 transaction log changes to Aurora during parallel-run, enabling near-zero-cutover-downtime migrations

For VSAM (Virtual Storage Access Method) files — the mainframe’s native indexed sequential file system — the migration path depends on access patterns:

- Keyed VSAM (KSDS): → Amazon Aurora (relational row structure) or DynamoDB (key-value with complex access patterns)

- Non-keyed VSAM (ESDS/RRDS): → Amazon S3 (flat file or Parquet format) with AWS Glue ETL for batch processing
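The two routing rules above can be captured in a small lookup applied to the VSAM inventory. This is a minimal sketch: the function name and the `key_value_access` flag are illustrative, and a real decision would also weigh record layout, volume, and consumers.

```python
def vsam_target(cluster_type, key_value_access=False):
    """Suggest an AWS target for a VSAM cluster type (KSDS, ESDS, RRDS)."""
    kind = cluster_type.upper()
    if kind == "KSDS":
        # Keyed data: relational by default, DynamoDB for key-value access
        return "DynamoDB" if key_value_access else "Aurora PostgreSQL"
    if kind in ("ESDS", "RRDS"):
        # Non-keyed data: land in S3 and process with Glue ETL
        return "S3 + Glue"
    raise ValueError(f"unknown VSAM cluster type: {cluster_type}")


# Hypothetical inventory entries: (cluster name, type, key-value access?)
inventory = [("CUST.MASTER", "KSDS", False), ("AUDIT.LOG", "ESDS", False)]
for name, kind, kv in inventory:
    print(name, "->", vsam_target(kind, kv))
```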

AWS Step Functions for JCL Batch Modernization

JCL (Job Control Language) batch job streams — the backbone of mainframe overnight processing — are replaced in the AWS target architecture by AWS Step Functions state machine workflows:

- JCL EXEC steps → AWS Lambda function invocations or ECS Fargate task executions

- JCL DD statements (data definition) → S3 object references or DynamoDB table references

- JES2/JES3 job scheduling → Amazon EventBridge scheduled rules (cron expressions) triggering Step Functions state machine executions

- Mainframe SORT utilities → AWS Glue Spark jobs or Lambda-based sort operations

- Conditional JCL (COND, IF/THEN/ELSE) → Step Functions Choice states

AWS provides a published pattern for this JCL-to-Step-Functions mapping, enabling systematic automated conversion of JCL batch streams.
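To make the mapping concrete, here is a minimal sketch that builds an Amazon States Language definition for a two-step job with a return-code check. The Lambda function names (`extract-step`, `report-step`), the `returnCode` field in the Lambda result, and the "continue if RC is 4 or less" threshold are assumptions for illustration, not output from any AWS conversion tool.

```python
import json


def jcl_step_state(function_name, next_state):
    """Task state standing in for one JCL EXEC step, run as a Lambda function."""
    return {
        "Type": "Task",
        "Resource": "arn:aws:states:::lambda:invoke",
        "Parameters": {"FunctionName": function_name, "Payload.$": "$"},
        "OutputPath": "$.Payload",  # keep only the function's own result
        "Next": next_state,
    }


state_machine = {
    "Comment": "Sketch of a two-step JCL job with a return-code check",
    "StartAt": "EXTRACT",
    "States": {
        "EXTRACT": jcl_step_state("extract-step", "CheckExtractRC"),
        # Plays the role of a COND / IF test on the previous step's return code
        "CheckExtractRC": {
            "Type": "Choice",
            "Choices": [
                {
                    "Variable": "$.returnCode",
                    "NumericLessThanEquals": 4,
                    "Next": "REPORT",
                }
            ],
            "Default": "JobFailed",
        },
        "REPORT": jcl_step_state("report-step", "JobSucceeded"),
        "JobSucceeded": {"Type": "Succeed"},
        "JobFailed": {
            "Type": "Fail",
            "Error": "StepReturnCode",
            "Cause": "Return code above 4",
        },
    },
}

print(json.dumps(state_machine, indent=2))
```

The printed JSON could be supplied as a state machine definition; an EventBridge schedule would then start executions in place of the JES job schedule.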

Step-by-Step Process: How to Migrate Mainframe to AWS

Phase 1 — Discovery & Assessment (Weeks 1–6)

The discovery phase is where most mainframe migration programmes succeed or fail. Undocumented dependencies between COBOL programs, CICS transactions, IMS segments, and DB2 tables are the primary cause of go-live failures. A rigorous discovery must cover:

Application Portfolio Cataloguing:

- Enumerate all COBOL source modules (.cob, .cbl, .cpy), JCL jobs, PROC libraries, and CICS transaction definitions

- Use AWS Transform’s agentic AI code analysis to parse source code and generate dependency graphs — even for programs with no surviving documentation

- Map CICS transactional applications, identifying BMS (Basic Mapping Support) screen definitions and COMMAREA data structures

- Identify IMS DBDs (Database Definitions) and PSBs (Program Specification Blocks) for IMS DC applications

Database and File Store Inventory:

- Enumerate DB2 schemas, tablespaces, stogroups, and row-level security rules

- Catalogue all VSAM clusters (KSDS, ESDS, RRDS) with volume/record size data

- Use AWS DMS Fleet Advisor to automatically discover database instances, assess schema complexity, and estimate Aurora conversion effort

Dependency Mapping:

- Identify all inter-program CALLs and LINK/XCTL chains

- Map IBM MQ (formerly MQSeries) message flows, if present, to Amazon MQ or Amazon SQS targets

- Document all batch job dependencies and predecessor/successor chains in the JES spool

Output: Application inventory workbook, dependency heat map, per-application strategy recommendation (rehost/replatform/refactor/replace), and Phase 2 architecture blueprint.
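For a feel of what static dependency discovery involves, the sketch below scans COBOL source text for literal CALL targets and EXEC CICS LINK/XCTL targets and builds a caller-to-callee map. The regular expressions are deliberately simplified assumptions: they ignore comment lines and cannot resolve dynamic calls through a data name, so this is no substitute for a dedicated analysis tool.

```python
import re

# Literal targets only, e.g. CALL 'ORDVALID' or EXEC CICS LINK PROGRAM('ORDPRICE')
CALL_RE = re.compile(r"\bCALL\s+['\"]([A-Z0-9#@$-]+)['\"]", re.IGNORECASE)
CICS_RE = re.compile(
    r"\bEXEC\s+CICS\s+(?:LINK|XCTL)\s+PROGRAM\s*\(\s*['\"]([A-Z0-9#@$-]+)['\"]",
    re.IGNORECASE,
)


def scan_dependencies(sources):
    """Map each program name to the set of programs it references statically."""
    graph = {name: set() for name in sources}
    for name, text in sources.items():
        for pattern in (CALL_RE, CICS_RE):
            for match in pattern.finditer(text):
                graph[name].add(match.group(1).upper())
    return graph


# Hypothetical source fragments keyed by program name
sample = {
    "ORDMAIN": (
        "    CALL 'ORDVALID' USING WS-ORDER.\n"
        "    EXEC CICS LINK PROGRAM('ORDPRICE') COMMAREA(WS-AREA) END-EXEC.\n"
        "    CALL WS-DYNAMIC-PGM USING WS-ORDER.\n"  # dynamic call: not resolved
    ),
    "ORDVALID": "    DISPLAY 'NO CALLS HERE'.\n",
}
print(scan_dependencies(sample))
```

The unresolved `CALL WS-DYNAMIC-PGM` line shows why static scanning alone leaves gaps that runtime tracing has to fill.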

Phase 2 — Design & Architecture Planning (Weeks 6–12)

Target State Architecture Design:

- Define AWS account structure (use AWS Control Tower multi-account model: production, non-production, security accounts)

- Design Amazon VPC topology: private subnets for Aurora instances, EC2 application tier, and Lambda functions; VPN or AWS Direct Connect for hybrid connectivity during parallel-run

- Select EC2 instance families: m7g (Graviton3) for Java/Spring Boot application tier; r7g for in-memory caching (ElastiCache); db.r7g for Aurora PostgreSQL

- Define Amazon Aurora cluster configuration: Multi-AZ deployment, read replicas for reporting workloads, Aurora Serverless v2 for variable-load batch scenarios

- Design VSAM-to-S3 data lake architecture with AWS Glue catalog for batch ETL consumers

- Define IAM role hierarchy and AWS Organizations Service Control Policies (SCPs) equivalent to z/OS RACF access controls

Data Migration Architecture:

- COBOL copybook structures → AWS Glue schema registry

- DB2 → Aurora PostgreSQL via AWS SCT + DMS CDC

- IMS → DynamoDB (for hierarchical data patterns) or Aurora (for relational restructuring)

- IBM MQ (MQ Series) → Amazon MQ (ActiveMQ or RabbitMQ engine) or Amazon SQS/SNS

Phase 3 — Proof of Concept (POC) Migration (Months 2–4)

Select 1–2 COBOL batch programs with well-understood business logic and low transaction criticality. Ideal POC candidates: a month-end reporting batch, a daily flat-file feed, or a reference data maintenance CICS screen.

Execute the full AWS Blu Age (or AWS Transform) COBOL-to-Java conversion pipeline on the selected programs. Validate:

- Functional equivalence: Compare output files line-by-line between mainframe production output and AWS-generated output for identical input data

- Performance benchmarking: Measure batch execution time on target EC2 instance types vs. mainframe elapsed time

- DB2 query translation: Validate that AWS SCT-converted SQL produces identical result sets on Aurora as the original DB2 queries

Document all conversion exceptions requiring manual remediation. This defect density data is essential for projecting the effort required in full-scale execution.
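The functional-equivalence check can start as simply as a line-by-line comparison of the two output files. The sketch below is one way to do it; ignoring trailing whitespace is an assumption (fixed-length mainframe records are often space-padded) and should be dropped if padding is significant for your data.

```python
from itertools import zip_longest


def compare_outputs(mainframe_lines, aws_lines):
    """Return (line_number, mainframe_line, aws_line) for every mismatch.

    A missing line on either side is reported with None in its place.
    """
    mismatches = []
    pairs = zip_longest(mainframe_lines, aws_lines)
    for number, (expected, actual) in enumerate(pairs, start=1):
        if (
            expected is None
            or actual is None
            or expected.rstrip() != actual.rstrip()
        ):
            mismatches.append((number, expected, actual))
    return mismatches


# Hypothetical report lines from the two environments
mainframe = ["ACCT0001 000012345", "ACCT0002 000000500"]
aws = ["ACCT0001 000012345", "ACCT0002 000000501", "ACCT0003 000000000"]
for number, expected, actual in compare_outputs(mainframe, aws):
    print(f"line {number}: mainframe={expected!r} aws={actual!r}")
```

Counting mismatches per program gives a first approximation of the defect density data mentioned above.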

Phase 4 — Full Migration Execution (Months 3–18)

Organise the application portfolio into migration waves, sequenced by risk and business impact:

| Wave | Application Type | Strategy | Duration |
| --- | --- | --- | --- |
| Wave 0 | Non-production environments | Rehost (emulation) | Month 1–2 |
| Wave 1 | Non-critical batch jobs (reporting, extracts) | Refactor to Lambda/Step Functions | Month 3–6 |
| Wave 2 | Reference data management, admin CICS screens | Refactor via Blu Age/Transform | Month 5–9 |
| Wave 3 | Core transactional CICS/IMS applications | Refactor + DB2 to Aurora | Month 8–15 |
| Wave 4 | Mission-critical OLTP (core banking, policy admin) | Refactor, final parallel-run | Month 12–18 |

During Waves 3–4, run the mainframe and AWS environments in parallel — both processing identical input transactions — with automated output comparison to detect business logic discrepancies before cutover.

Phase 5 — Testing & Validation (Ongoing, Intensive in Months 12–18)

Mainframe migration testing is categorically different from standard software QA. The acceptance criterion is output fidelity — the AWS system must produce the same computational results as the mainframe for every test case, including edge cases in legacy COBOL arithmetic (COMP-3 packed decimal, fixed-point rounding) that have historically caused production defects in naive refactoring projects.
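COMP-3 is a good example of why output fidelity is hard: each byte holds two decimal digits, and the final half-byte holds the sign. The sketch below decodes such a field into Python's `Decimal` so that no binary floating-point rounding creeps in. The function name and the `scale` parameter (the number of implied decimal places from the PIC clause) are illustrative.

```python
from decimal import Decimal


def unpack_comp3(raw, scale=0):
    """Decode IBM packed-decimal (COMP-3) bytes into a Decimal.

    scale is the number of implied decimal places, e.g. 2 for S9(5)V99.
    """
    digits = []
    for byte in raw[:-1]:
        digits.append(byte >> 4)    # high nibble
        digits.append(byte & 0x0F)  # low nibble
    digits.append(raw[-1] >> 4)     # last byte: one digit, then the sign
    sign_nibble = raw[-1] & 0x0F
    if any(d > 9 for d in digits) or sign_nibble < 0x0A:
        raise ValueError("not a valid packed-decimal field")
    value = int("".join(map(str, digits)))
    if sign_nibble in (0x0B, 0x0D):  # B and D mark negative values
        value = -value
    return Decimal(value).scaleb(-scale)


# PIC S9(5)V99 COMP-3 holding +12345.67 is stored as 12 34 56 7C
print(unpack_comp3(bytes.fromhex("1234567C"), scale=2))
```

Test fixtures captured on the mainframe can be decoded this way and compared against the values the refactored Java code produces.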

Testing Regime:

- Unit testing: Each converted COBOL program has an AWS Blu Age-generated JUnit test suite with mainframe-captured input/output test cases

- Integration testing: End-to-end CICS transaction flows and JCL job streams executed in staging against Aurora

- Load & stress testing: Apache JMeter or AWS Distributed Load Testing solution replicating peak MIPS utilisation on the mainframe against target EC2 tier

- Regression testing: Automated comparison of AWS batch output against archived mainframe output for 6–12 months of historical job runs

- Security testing: Amazon Inspector assessment of EC2 instances; IAM Access Analyzer for privilege review; Amazon Detective and Amazon GuardDuty activation

Phase 6 — Go Live & Decommission (Month 18–24)

Production Cutover Playbook:

- Freeze mainframe schema changes 72 hours before cutover (DB2 DDL freeze)

- Final AWS DMS CDC sync — confirm replication lag < 1 second

- Put mainframe DB2 in read-only mode; promote Aurora as primary

- Execute Route 53 (or equivalent DNS) switchover for CICS web service endpoints

- Scale AWS EC2 Auto Scaling group to peak capacity

- Execute first AWS Step Functions batch run on production schedule

- Monitor Aurora Performance Insights, CloudWatch metrics, and X-Ray traces for the first 72 hours

- Keep mainframe warm (not decommissioned) for 30-day rollback window

Mainframe Decommission Checklist:

- All DB2 foreign keys and triggers validated on Aurora for 30 days

- All JCL batch jobs producing confirmed equivalent AWS Step Functions output

- VSAM data archived to S3 Glacier Deep Archive (compliance retention)

- IBM software licences formally cancelled (notify IBM 30–90 days in advance per contract)

- Hardware return or disposal completed per IBM contract terms

- z/OS system logs archived to S3 for regulatory retention period

Mainframe to AWS Cost Analysis — What to Budget

| Cost Category | Mainframe (Annual) | AWS Equivalent (Annual) | Savings % |
| --- | --- | --- | --- |
| Hardware (lease/depreciation) | $2.0M–$8.0M | $0 (eliminated) | 100% |
| IBM z/OS software licensing (MIPS-based) | $3.0M–$15.0M | $0 (eliminated) | 100% |
| ISV middleware (CICS, IMS, DB2) | $1.0M–$4.0M | Aurora: $120k–$400k; Lambda: $20k–$80k | 85–95% |
| Data centre (power, cooling, space) | $500k–$2.0M | $0 (eliminated) | 100% |
| Mainframe operations staffing | $800k–$2.5M | AWS DevOps staffing | 40–60% |
| AWS EC2 + Aurora + S3 | $0 | $300k–$1.5M | n/a |
| Total 5-Year TCO | $35M–$155M | $3.5M–$20M | 60–90% |

Current Mainframe Operating Costs (MIPS Pricing)

The MIPS-to-cost relationship is non-linear. IBM’s tiered model means the first MSUs in a LPAR (Logical Partition) are the most expensive per unit. An organisation running a 5,000-MIPS workload on a z16 system could be paying anywhere from $5M–$10M annually in total software licensing and support — before hardware and facilities.

Migration Project Costs

| Phase | Effort/Cost (Small, <2M COBOL LOC) | Effort/Cost (Large, >10M COBOL LOC) |
| --- | --- | --- |
| Discovery & Assessment | $150k–$400k | $500k–$1.5M |
| Architecture Design | $100k–$250k | $300k–$800k |
| COBOL Conversion (AWS Transform/Blu Age) | $400k–$1.2M | $2.0M–$6.0M |
| DB2/VSAM Data Migration | $150k–$500k | $800k–$2.5M |
| Testing & Validation | $200k–$600k | $1.0M–$3.0M |
| Go Live & Stabilisation | $100k–$250k | $400k–$1.0M |
| Total Migration Project | $1.1M–$3.2M | $5.0M–$14.8M |

Note: Kyndryl’s 2025 survey reported the average modernization project cost dropped to $7.2M in 2025 from $9.1M in 2024, with ROI more than doubling year-over-year as tooling maturity and automation (especially AWS Transform) reduce manual effort.

ROI Timeline — When Do You Break Even?

For a mid-market organisation running 5,000 MIPS with a $12M/year mainframe TCO migrating to AWS at a project cost of $4M:

- Year 1: $4M project cost; $8M mainframe savings = net savings $4M

- Year 2: $400k AWS optimisation; $12M savings = $11.6M net savings

- Break-even: Typically Month 8–14 for organisations >3,000 MIPS
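The figures above can be reproduced with a simple month-by-month model. This is a sketch under stated assumptions: the $4M project cost is treated as spent upfront, savings of $1M per month ($12M per year) begin in month 5 (which yields the $8M of year-one savings quoted above), and AWS run costs are ignored as they are in the Year 1 line.

```python
def break_even_month(project_cost, monthly_savings, first_savings_month, horizon=60):
    """Return the first month in which cumulative savings cover the project cost.

    Returns None if break-even is not reached within the horizon.
    """
    cumulative = -project_cost
    for month in range(1, horizon + 1):
        if month >= first_savings_month:
            cumulative += monthly_savings
        if cumulative >= 0:
            return month
    return None


month = break_even_month(
    project_cost=4_000_000,
    monthly_savings=12_000_000 / 12,
    first_savings_month=5,  # assumption: savings start after initial cutover
)
print(f"Break-even in month {month}")  # Break-even in month 8
```

Pushing `first_savings_month` later, or lowering the monthly savings, moves the result toward the upper end of the 8–14 month range.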

Common Challenges in Mainframe to AWS Migration

COBOL Code That No One Understands Anymore

The most dangerous mainframe migration scenario is the “orphan COBOL program” — a 40,000-line batch job written in 1978 that no one on the current team has ever opened. AWS Transform’s agentic AI specifically targets this scenario: it parses COBOL source, generates natural-language documentation of business logic, and produces dependency graphs that reconstruct institutional knowledge lost to retirement. For organisations that cannot locate COBOL source code at all, the only option is binary rehosting (Micro Focus/OpenText emulation) as a first step, buying time to reverse-engineer business logic.

Undocumented Dependencies & Spaghetti Code

CICS applications built over decades typically contain dynamic EXEC CICS LINK calls resolved at runtime, making static dependency analysis incomplete. Address this with:

- AWS Transform agentic analysis as the primary discovery tool

- Dynamic call tracing using IBM Fault Analyzer or equivalent tools in a QA environment

- Deliberately conservative wave sequencing — never migrate a CICS application before all its downstream CALLED programs are confirmed migrated or rehosted
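The sequencing rule in the last bullet is a topological ordering problem: every called program must come before its callers. A minimal sketch using Python's standard `graphlib` module (Python 3.9+) follows; the program names are hypothetical.

```python
from graphlib import TopologicalSorter


def migration_order(calls):
    """Order programs so that every called program precedes its callers.

    calls maps each program to the set of programs it calls.
    Raises graphlib.CycleError if the call graph contains a cycle.
    """
    return list(TopologicalSorter(calls).static_order())


# Hypothetical call graph: caller -> programs it calls
calls = {
    "ORDMAIN": {"ORDVALID", "ORDPRICE"},
    "ORDPRICE": {"TAXCALC"},
}
print(migration_order(calls))
```

A `CycleError` here is useful information in itself: mutually dependent programs have to move together in the same wave.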

DB2 to Aurora PostgreSQL Schema Conversion Issues

AWS SCT converts the majority of DB2 DDL automatically, but specific IBM extensions require manual remediation:

- DB2 CURRENT TIMESTAMP special registers → NOW() in PostgreSQL (trivial)

- DB2 FETCH FIRST n ROWS ONLY → LIMIT n in PostgreSQL (trivial)

- DB2 recursive SQL (recursive CTEs written with a plain WITH) → PostgreSQL WITH RECURSIVE (compatible once the RECURSIVE keyword is added)

- DB2 Label-Based Access Control (LBAC) → Aurora row-level security (requires manual policy mapping)

- DB2 ARRAY, XML, DECFLOAT data types → Require data type mapping and application-layer changes

- Stored procedures with dynamic SQL and cursors → Often requires manual rewriting; AWS SCT flags these explicitly
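The two "trivial" rewrites at the top of this list are mechanical enough to show in a few lines. The sketch below is a toy illustration only: it uses regular expressions that do not understand string literals or comments, and it is not how AWS SCT works internally.

```python
import re

FETCH_FIRST = re.compile(r"FETCH\s+FIRST\s+(\d+)\s+ROWS?\s+ONLY", re.IGNORECASE)
CURRENT_TS = re.compile(r"\bCURRENT\s+TIMESTAMP\b", re.IGNORECASE)


def db2_to_postgres(sql):
    """Apply two simple DB2-to-PostgreSQL syntax rewrites to a SQL string."""
    sql = FETCH_FIRST.sub(r"LIMIT \1", sql)  # FETCH FIRST n ROWS ONLY -> LIMIT n
    sql = CURRENT_TS.sub("NOW()", sql)       # CURRENT TIMESTAMP -> NOW()
    return sql


db2_sql = (
    "SELECT ID, CURRENT TIMESTAMP FROM ORDERS "
    "ORDER BY ID FETCH FIRST 10 ROWS ONLY"
)
print(db2_to_postgres(db2_sql))
```

The remaining items in the list (LBAC, special data types, dynamic SQL in stored procedures) have no such one-line equivalent, which is why they are flagged for manual work.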

Batch Processing Modernization Complexity

JCL batch streams that run overnight on mainframes frequently contain hundreds of inter-dependent steps with conditional logic encoded in COND parameters and RETURN CODE checks. The cognitive challenge is that JCL is not a programming language — it is a resource allocation and job sequencing syntax with execution semantics specific to JES2/JES3. AWS Step Functions provides the correct semantic model (state machines, choice states, error handling, parallel execution), but the translation requires systematic analysis of each JCL step. AWS has published prescriptive guidance for this mapping, and AWS Transform automates a significant portion of it.

Organizational Resistance to Change

Beyond the technical challenges, mainframe migration programmes face structural organizational resistance: mainframe operations teams whose roles are threatened, business owners who have justified decades of mainframe investment and are reluctant to acknowledge its limitations, and risk-averse governance committees who confuse “familiar” with “safe.” Address this through a formal organizational change management programme running in parallel with the technical workstream — executive sponsorship, RACI clarity, and skills transition planning for mainframe staff retraining into AWS DevOps roles.

Automated Mainframe Migration Tools on AWS

| Tool | Provider | Approach | COBOL Support | Automation Level | Best For |
| --- | --- | --- | --- | --- | --- |
| AWS Transform | AWS (native) | Agentic AI analysis + conversion | COBOL, JCL, Natural, PL/I | Very High | New engagements from 2025 onward; largest code bases |
| AWS Blu Age | AWS (native) | Automated COBOL → Java Spring | IBM Enterprise COBOL, ACUCOBOL, MicroFocus, Fujitsu | 100% automated initial conversion | Organisations wanting Java Spring target |
| Astadia FastTrack | Astadia (Amdocs) | Automated refactoring factory | COBOL, Assembler, JCL, Natural | High (reduces project duration by up to 90%) | National retailers, publishers, government agencies |
| CloudFrame | CloudFrame | COBOL modernization platform | IBM COBOL | High | Banking and financial services |
| Micro Focus/OpenText Enterprise Server | OpenText | COBOL runtime emulation | IBM Enterprise COBOL | N/A (emulation, no conversion) | Rehost path; interim derisking |

Astadia FastTrack — The Migration Factory Model

Astadia’s FastTrack platform (now part of Amdocs) operates as a “migration factory” — a standardised, repeatable pipeline that parallelises conversion of thousands of COBOL programs simultaneously. Its DataTurn component automates migration of VSAM, IMS, and DB2 data stores to relational databases. FastTrack is available on the AWS Marketplace and has been deployed for national retailers, global publishing organisations, and government agencies. The platform claims to reduce typical migration project durations by up to 90%.

Automated vs. Manual Migration Comparison

| Dimension | Fully Automated (AWS Transform / Blu Age) | Manual Refactoring |
| --- | --- | --- |
| Initial conversion speed | 1.5M+ lines of code/month | 5,000–15,000 lines/developer/month |
| Output code quality | Functionally correct; may require readability improvements | Developer-idiomatic; higher maintainability from day 1 |
| Handling of undocumented code | AI-generated documentation + best-effort conversion | Requires human reverse engineering |
| Cost per KLOC | $50–$150 | $500–$2,000 |
| Risk of business logic loss | Low (bit-identical testing regime) | Medium (human error in translation) |
| Best for | Large code volumes (>1M LOC); time-pressured programmes | Small, highly complex, strategic code components |

Real-World Mainframe to AWS Migration Case Studies

Case Study 1 — Financial Services Firm (NTT DATA)

A financial services firm with extensive mainframe infrastructure worked with NTT DATA to migrate front-office applications to AWS. The engagement reduced data centre costs and mainframe MIPS consumption by one-third, freeing capital for cloud-native development. The migration adopted DevOps practices — CI/CD pipelines, automated testing, and Infrastructure as Code via Terraform — that the mainframe environment had structurally prevented.

Case Study 2 — Government Agency Mainframe Modernization with AWS GovCloud

AWS Mainframe Modernization is available in AWS GovCloud (US) regions, supporting FedRAMP High, DoD IL2–IL5, HIPAA, and FISMA compliance frameworks. This makes it the only hyperscaler with a validated, FedRAMP-authorised mainframe modernization toolchain — directly addressing the federal government’s $2.4 billion annual legacy mainframe spend. US federal agencies using AWS GovCloud can leverage AWS Transform for agentic AI-powered code analysis while maintaining data residency and access control requirements mandated by FISMA and ITAR.

Case Study 3 — Consumer Goods Company (NTT DATA / AWS)

A leading consumer goods company partnered with NTT DATA to plan and execute a mainframe migration to AWS Mainframe Modernization. Post-migration, the company adopted full DevOps practices — including automated regression testing and CI/CD pipelines — that had been impossible in the mainframe environment. Operational agility and deployment frequency improved dramatically, directly impacting time-to-market for new product launches.

Case Study 4 — State Farm Insurance (re:Invent Showcase)

State Farm presented its mainframe modernization programme at AWS re:Invent 2025, describing a “think big, start small, scale fast” methodology. The team used AWS Lambda and AWS Step Functions as a consistent, repeatable deployment engine for modernized applications, and migrated its insurance policy administration systems progressively in waves. These systems are among the most complex COBOL application landscapes in the industry. The programme demonstrated that gradual, factory-model modernization can be applied successfully to very large COBOL estates without disruptive big-bang cutover events.

Mainframe to AWS Migration — Risk Mitigation Checklist

- Complete COBOL source inventory confirmed before any conversion begins — binary-only programs must be identified and rehosted, not converted

- AWS Transform / Blu Age POC completed on representative programs before full programme commitment

- DB2 schema complexity assessed using AWS SCT — all “action required” items assigned to developers before wave planning

- VSAM data migration strategy defined per cluster type (KSDS → Aurora; ESDS → S3) with record format documentation

- JCL batch dependency chain documented using dependency analysis tooling — no JCL step migrated without confirmed prerequisite migration

- CICS transaction COMMAREA structures catalogued and mapped to REST/JSON API contracts for the Java target

- Parallel-run environment provisioned and confirmed before Wave 3 (transactional applications) begins

- IBM software licence termination notice drafted and scheduled per contract terms (typically 30–90 days)

- Aurora performance benchmarked at 150% of peak mainframe transaction throughput before production cutover

- AWS IAM policies mapped to z/OS RACF profiles — no service principal should have broader permissions than its RACF equivalent

- CloudWatch alarms and X-Ray tracing configured for all migrated services before go-live

- Rollback plan tested — mainframe kept warm at minimal MIPS configuration for 30 days post-cutover

- Staff retraining plan in place for mainframe operations team transitioning to AWS DevOps roles

- Regulatory data retention confirmed: VSAM and DB2 archives stored in S3 Glacier storage classes with appropriate S3 Object Lock retention policies
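The JCL dependency item in the checklist above comes down to ordering jobs so that nothing is migrated before its prerequisites. A minimal sketch, assuming the dependency chain has already been extracted into a mapping of job name to prerequisite jobs (the job names here are hypothetical), uses Python's standard-library `graphlib` to group jobs into migration batches:

```python
from graphlib import TopologicalSorter

# Hypothetical batch jobs: each job maps to the set of jobs that must run before it.
JOB_PREREQUISITES = {
    "EXTRACT1": set(),
    "LOADREF": set(),
    "SORTCUST": {"EXTRACT1"},
    "BILLCALC": {"SORTCUST"},
    "RPTDAILY": {"BILLCALC", "LOADREF"},
}


def plan_migration_batches(prerequisites):
    """Group jobs into ordered batches so every prerequisite lands in an earlier batch."""
    sorter = TopologicalSorter(prerequisites)
    sorter.prepare()  # raises graphlib.CycleError if the dependencies are circular
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # jobs whose prerequisites are all done
        batches.append(ready)
        sorter.done(*ready)
    return batches


if __name__ == "__main__":
    for number, batch in enumerate(plan_migration_batches(JOB_PREREQUISITES), start=1):
        print(f"Batch {number}: {', '.join(batch)}")
```

Jobs within one batch have no dependencies on each other, so they can be converted and tested in parallel. A circular dependency surfaces as an error at planning time rather than during cutover.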

Frequently Asked Questions

Q1: How long does it take to migrate a mainframe to AWS?

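Timelines vary by code volume and strategy. A simple rehost can take 3–6 months, while large-scale refactoring projects may run 18–36 months. AI-driven tools like AWS Transform can shorten the conversion phase considerably: the comparison table above cites 1.5M+ lines of code per month for automated conversion, against 5,000–15,000 lines per developer per month for manual refactoring.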

Q2: What is the cost of migrating a mainframe to AWS?

Costs typically range from $1M to $15M depending on application size and complexity. Most enterprises achieve ROI within 8–14 months through reduced infrastructure and operational costs.

Q3: Can COBOL applications run on AWS?

Yes. COBOL workloads can be rehosted using Micro Focus/OpenText on EC2 without code changes, or refactored into Java using AWS Blu Age or AWS Transform for cloud-native deployment.

Q4: What is the difference between rehosting and refactoring?

Rehosting moves applications to AWS without changing source code. Refactoring converts COBOL into modern languages like Java, eliminating mainframe dependencies and enabling cloud-native capabilities.

Q5: What happens to DB2 data during migration?

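DB2 databases are migrated using the AWS Schema Conversion Tool (AWS SCT) and AWS Database Migration Service (AWS DMS). AWS SCT converts the schema, and AWS DMS moves the data, using change data capture (CDC) replication to keep Amazon Aurora PostgreSQL in sync with the source so that cutover can happen with near-zero downtime.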
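Before cutover, teams usually want independent evidence that the target matches the source. The sketch below is one simple way to do that, assuming rows have already been extracted from both sides as tuples with the primary key in a known position. It is an illustrative helper and not how AWS DMS validates data internally; the function names and sample rows are hypothetical, and real extracts would need type normalisation (for example, decimal and timestamp formatting) before hashing.

```python
import hashlib


def row_digest(row):
    """Hash a row's values in column order; None is encoded differently from ''."""
    digest = hashlib.sha256()
    for value in row:
        encoded = b"\x00NULL" if value is None else str(value).encode("utf-8")
        digest.update(len(encoded).to_bytes(4, "big"))  # length prefix avoids ambiguity
        digest.update(encoded)
    return digest.hexdigest()


def reconcile(source_rows, target_rows, key_index=0):
    """Compare two row sets by key and report missing, extra, and changed keys."""
    source = {row[key_index]: row_digest(row) for row in source_rows}
    target = {row[key_index]: row_digest(row) for row in target_rows}
    return {
        "missing": sorted(source.keys() - target.keys()),  # in source, not in target
        "extra": sorted(target.keys() - source.keys()),    # in target, not in source
        "changed": sorted(
            key for key in source.keys() & target.keys() if source[key] != target[key]
        ),
    }


if __name__ == "__main__":
    db2_rows = [(1, "ALICE", 100), (2, "BOB", None), (3, "CAROL", 50)]
    aurora_rows = [(1, "ALICE", 100), (2, "BOB", ""), (4, "DAVE", 10)]
    print(reconcile(db2_rows, aurora_rows))
```

In this sample, key 3 is missing from the target, key 4 exists only in the target, and key 2 is flagged as changed because a NULL was loaded as an empty string, a common class of conversion defect.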

Conclusion — The Future Is Cloud, Not Mainframe

Migrating a mainframe to AWS is no longer just a modernization initiative — it is a strategic move to control costs, reduce operational risk, and secure long-term technical sustainability. Organizations that act decisively are not simply upgrading infrastructure; they are future-proofing their application estate and unlocking cloud-native innovation.

If your modernization roadmap also includes virtualized environments, you may find additional insights in our guide on VMware to AWS migration (https://go-cloud.io/vmware-to-aws-migration/), where we break down strategy, execution models, and cost considerations in detail.

At GoCloud, we help enterprises design structured, low-risk migration strategies — from legacy mainframes to VMware workloads — ensuring measurable ROI, controlled timelines, and long-term cloud optimization.

Key takeaways for enterprise architects and IT directors:

- Start with AWS Transform for code discovery and documentation — even if your programme won’t begin for 12 months, the agentic AI analysis pays for itself immediately by reconstructing institutional knowledge

- Sequence by risk, not by size — migrate non-critical batch jobs first; prove the pattern; build organisational confidence before touching core transactional systems

- Never migrate compute before data — DB2 to Aurora CDC replication must be validated and stable before COBOL application cutover

- Keep the mainframe warm for 30 days post-cutover — a tested rollback plan is not optional

- Plan staff transition in parallel — the mainframe operations team that made today possible is the DevOps team that will run AWS tomorrow, with the right retraining investment

As agentic AI capabilities embedded in AWS Transform continue to mature through 2026, the automation ratio in mainframe modernization programmes will approach levels that make the technical barrier to migration negligible compared to the organizational one. The programmes that succeed are those that solve the people problem as rigorously as the technology problem.